[ PRIVACY ]

The privacy-first company that changed its mind

One major AI company built its brand on being the responsible choice. Then it quietly reversed its privacy commitments and started training on user data.

The responsible AI brand

Anthropic positioned itself from the start as the safety-focused, privacy-respecting alternative to OpenAI. Its founders left OpenAI explicitly over concerns about safety culture, and the company's marketing emphasized responsible development, careful deployment, and respect for user data. Privacy-conscious users chose Anthropic's Claude specifically because the company said it would not train its models on their conversations by default. For many, that was the deciding factor in choosing one AI assistant over another.

The August 2025 policy reversal

In August 2025, Anthropic updated its consumer terms and privacy policy so that conversations on its Free, Pro, and Max plans could be used to train its models. Existing users were shown a prompt with the training toggle switched on by default. The change was framed as necessary for model improvement and was accompanied by a blog post emphasizing the quality benefits of learning from real interactions. What the blog post did not emphasize was the fundamental shift in the privacy contract. Users who had chosen Anthropic specifically because it did not train on their data discovered that it now would, unless they found the toggle and switched it off.

Five years of your conversations

The updated privacy policy also extended data retention to five years for users who allow training; conversations from users who opt out remain under the earlier 30-day retention period. Five years is not a technical necessity. It is a business decision that maximizes the value of collected data across multiple model training cycles. Over five years, a user's conversation history becomes a comprehensive record of their evolving thoughts, concerns, interests, and vulnerabilities. The retention period was set not by what users would want but by what would be most useful for the company's training pipeline.

Opt-out versus opt-in

The distinction between opt-out and opt-in is not a technicality. It is the difference between a system designed to respect privacy and a system designed to maximize data collection. Opt-in means users actively choose to share their data, with full knowledge of what that means. Opt-out means users share their data by default, and the company bets that most people will never find the setting, never understand the implications, or never bother to change it. Anthropic chose opt-out because opt-out collects more data. The decision tells you everything about priorities.

How quickly trust evaporates

Trust in a technology company is built over years of consistent behavior and destroyed in a single policy update. Users who recommended Anthropic to friends, colleagues, and clients based on its privacy commitments found themselves in an uncomfortable position. The company they vouched for had changed the terms of the relationship without meaningful consultation. Once a company demonstrates that its privacy commitments are contingent on business conditions, every future privacy claim is received with justified skepticism. Trust, once broken on a matter this fundamental, does not recover.

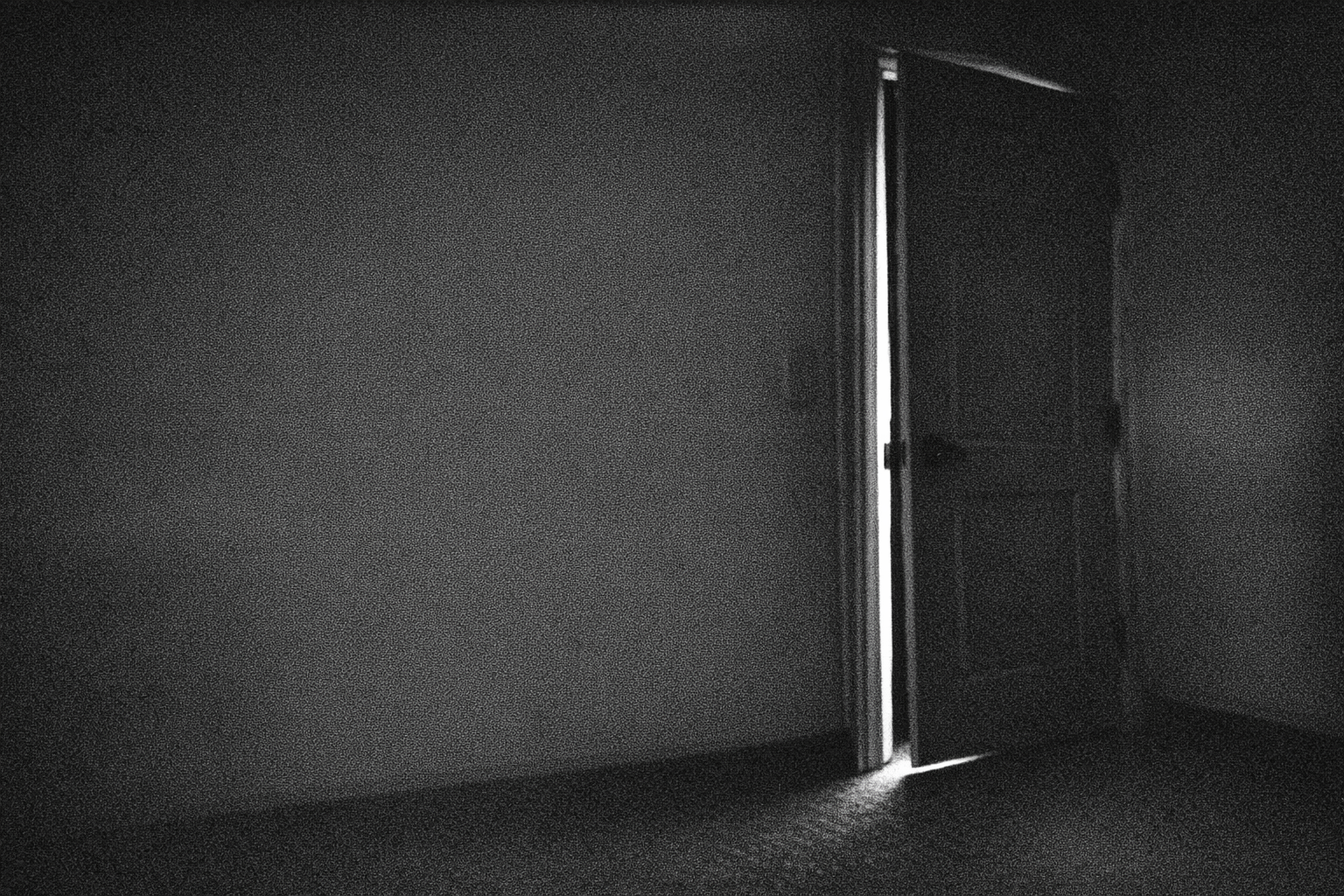

Privacy enforced by architecture, not policy

SecureGPT's privacy commitments are not enforced by policy. They are enforced by architecture. Your messages are encrypted before they leave your device. The server processes the request and discards the content. There is no database to retain, no training pipeline to feed, and no business reason to change this because the system literally cannot access your conversations in a storable form. A privacy policy is a promise. Encryption is a guarantee. When you have to choose between the two, the choice should be obvious.
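As a rough illustration of the "encrypt on the device, process, then discard" flow described above, here is a minimal Python sketch. It is not SecureGPT's actual implementation: the function names (`encrypt_on_device`, `decrypt`, `handle_request`) are hypothetical, a one-time pad stands in for a real authenticated cipher such as AES-GCM, and the way the key reaches the server (for example, a per-session key exchange) is assumed and left out of scope.

```python
import secrets
from typing import Callable, Tuple


def encrypt_on_device(plaintext: str) -> Tuple[bytes, bytes]:
    """Encrypt a message with a fresh one-time pad before it leaves the device.

    Returns (key, ciphertext). The pad is a stand-in for a real cipher;
    a production system would use an authenticated scheme instead.
    """
    data = plaintext.encode("utf-8")
    key = secrets.token_bytes(len(data))  # random key, same length as the message
    ciphertext = bytes(a ^ b for a, b in zip(data, key))
    return key, ciphertext


def decrypt(key: bytes, ciphertext: bytes) -> str:
    """Reverse the XOR to recover the original message."""
    return bytes(a ^ b for a, b in zip(ciphertext, key)).decode("utf-8")


def handle_request(key: bytes, ciphertext: bytes,
                   model: Callable[[str], str]) -> str:
    """Hypothetical stateless handler: decrypt in memory, answer, keep nothing.

    There is deliberately no database write, log call, or cache here;
    the plaintext exists only as a local variable for the duration of the call.
    """
    message = decrypt(key, ciphertext)
    return model(message)
```

The point of the sketch is the shape of the handler rather than the cipher: the only thing that leaves `handle_request` is the reply, so there is nothing left over to retain or feed into a training pipeline.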